Over the last decade, Meta has invested billions to figure out what the next big thing will be. Whether it’s a VR headset, AR glasses, or an AI companion, Meta has been working on it, and Caitlin Kalinowski has almost certainly been at the center of it.

In his weekly column, Nick Sutrich, Senior Content Producer at Android Central, covers all things VR, from new hardware to new games to upcoming technologies and much more.

I had the opportunity to chat with Kalinowski (CK, as she likes to call herself), who previously led product design and integration for the Oculus Rift, Oculus Go, and Oculus Quest headset lines. She has two decades of experience in product design, including time on the original unibody MacBook Pro team and work on every Oculus hardware product released since the original Touch controllers for the Oculus Rift.

But while that track record is impressive, someone like Kalinowski is always looking for the next big thing. Now she’s working on Project Nazare, Meta’s first true AR glasses, which aim to redefine how we think about smart glasses.

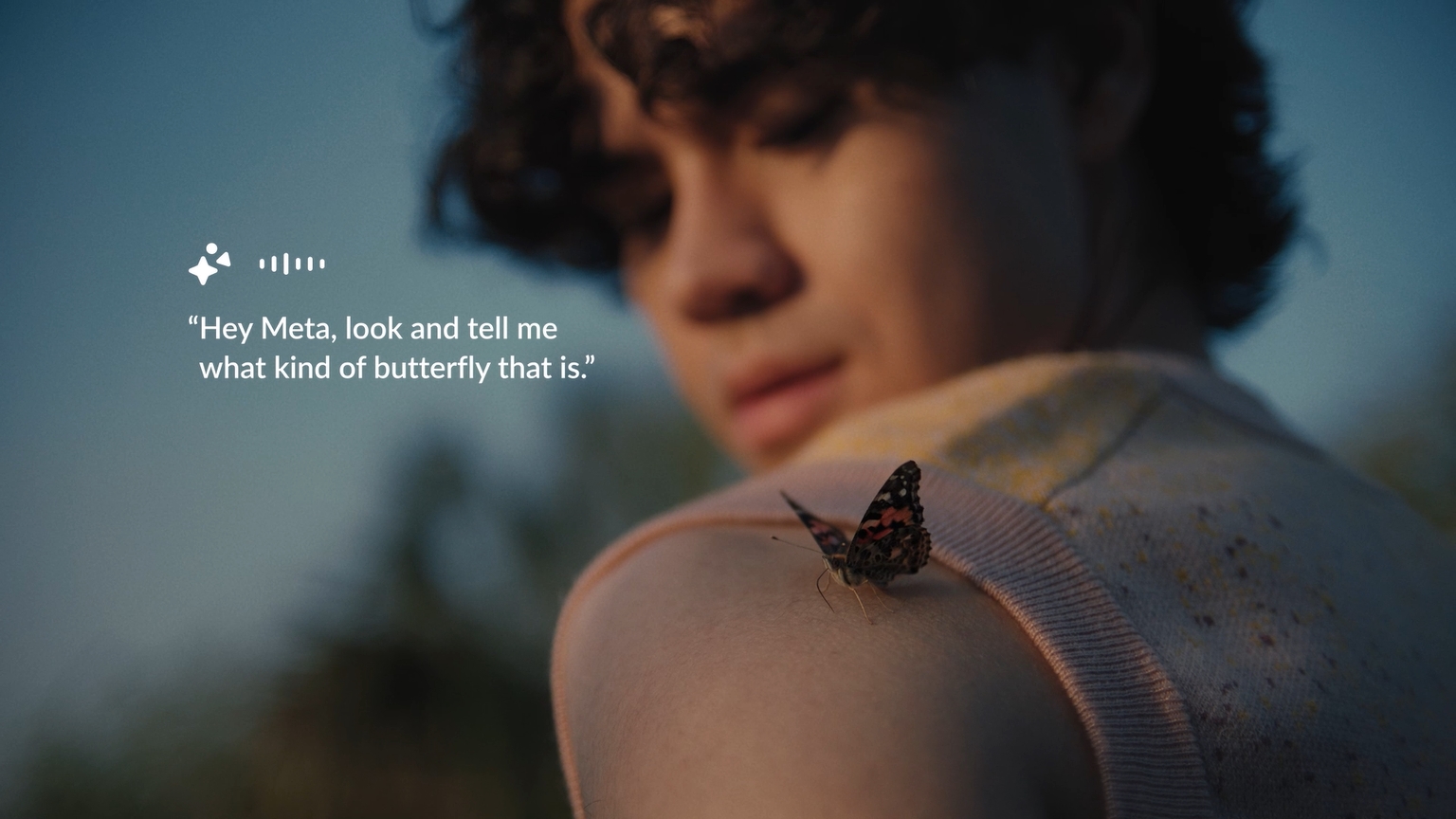

And while the Ray-Ban Meta smart glasses have been a huge success, Kalinowski tells me that what her team is building goes far beyond what existing products can do. Yes, that even includes the new video calling and AI features that just launched for Ray-Ban Metas. The new glasses are so impressive that she told me they deliver “the same level of ‘Oh my god, wow! I can’t believe this!’” that the original Rift had.

Make it wearable

Six years ago, Meta went all-in on the standalone concept when it introduced the Oculus Quest. Since then, we’ve seen two new generations of headsets, culminating recently with the launch of Meta Horizon OS, effectively Meta’s own Android for VR. Kalinowski told me that there was “a big debate internally early on” about whether to focus on wired headsets like the Rift or go completely standalone with the Quest concept.

But long after the dust has settled on those debates, Meta’s next big product is expected to deliver the same level of “Oh my god, wow! I can’t believe this!” that the original Rift had.

In my experience, most people who try a modern VR headset are immediately impressed. It’s one of those experiences that’s hard to put into words unless you’ve tried it yourself: the virtual becomes actual reality for your eyes and your mind.

Kalinowski told me that she’s passionate about “giving customers a new experience they’ve never had before,” something that Project Nazare can deliver if what I was told holds true for the final product.

“The next big technology I’m working on right now is full AR glasses,” she says. That doesn’t just mean glasses that can mirror your smartphone, or something like Ray-Ban Metas that can take pictures or play music, but glasses that completely expand the world you see with projected images.

Meta is working on a version of this for Quest 3 headsets this fall, a feature appropriately called “Augments,” but it will pale in comparison to what proper AR glasses can do because of the loss in quality that comes with the passthrough view on a VR headset.

Kalinowski explained: “Customers will be able to see both the original real-world photons and the desired overlay.” In plain English, this means that your view of the real world through these glasses will be no different from the one you would see through a pair of sunglasses. The light is not captured by cameras and then re-projected in “passthrough” mode as it is on the Meta Quest 3.

Instead – based on previous spec leaks from Project Nazare – the micro-OLED projectors convincingly overlay virtual objects onto the world you see. However, current AR glasses that attempt this, like Snap Spectacles 4 or Magic Leap 2, have an extremely small field of view. This means the virtual object overlay is only in a tiny square in your field of view, instantly breaking the immersion.

As Kalinowski notes, “Nothing prepares you for the high field-of-view immersion” of Project Nazare. In other words, they’re the game-changing AR glasses we need, offering an immersive experience similar to a VR headset while maintaining a truly comfortable, portable form factor.

AI and availability

Throughout the interview, Kalinowski was careful not to reveal too much about products like Project Nazare “until they’re ready.” There’s an important expectation to set, and Meta can’t afford to release anything too soon, especially if the company plans to present it as the “iPhone moment” that CEO Mark Zuckerberg reportedly wants.

But the excitement in Kalinowski’s voice is unmistakable. The company has clearly made a breakthrough in several key areas and is undoubtedly closer to realization than perhaps any other company before it.

Some of the big breakthroughs have come from recent advances in AI, but it’s not just generative AI like ChatGPT that you might think of when you hear the term. The company was able to shrink its camera sensors thanks to AI that can denoise images in real time. This creates more space for things like processors and batteries.

In addition, Kalinowski said AI can now be used to further improve SLAM (short for simultaneous localization and mapping), the technique that lets devices such as robot vacuum cleaners know where they are in your home. Here, AI can be used to compress important data so that it can be processed faster, reducing the power requirements of the glasses.

Perhaps even more exciting, she admitted that “we don’t even know what they’re all going to be yet” when it comes to the ways in which AI can virtually boost the processing power and capabilities of low-power devices like AR glasses.

“We looked at what was happening in AI, consciously looked at our roadmap, and made changes to benefit from this AI revolution.” In other words, the way we envisioned AR glasses is completely different now that modern AI systems exist. “The exciting thing is that AI is changing every week,” she adds, which would certainly make developing a product as large as Project Nazare challenging.

Without using examples that might reveal too much, Kalinowski described a scenario that illustrates AI’s visual learning capabilities and how it can begin to understand context in surprising ways. The AI could “understand that you’re skateboarding” and offer different contextual ways to provide input, or even help quickly record a video when you do an awesome trick (that last part is my own example based on the conversation).

But as Kalinowski points out, sometimes we have to wait and see what will happen, even when we have all the technology. “When GPS first came out, few would have imagined that it would be used to call an Uber from anywhere,” she noted. It’s the clever combination of hardware and software ideas that really gives these products that “must-have” feel.

Realistically, however, this still means consumers probably won’t get their hands (or heads) on Meta’s breakthrough AR glasses for another three years. Ray-Ban Metas’ success has been “unusually great,” as Kalinowski said, and the product appears to have caught on just as well as the Oculus Quest 2 did when it launched in 2020.

These strong sales figures bode well for future AR glasses that can do even more and still look good. Ray-Ban Metas’ AI component was “originally not even core” to the product, yet it seems to be one of the most interesting reasons to buy a pair and use them daily.

As Meta continues to push the “open ecosystem” mantra, openness and other areas of transparency will ultimately help gain the trust needed for people to use real AR glasses every day. It’s an important piece of the puzzle that shouldn’t be overlooked, and one that teams like Kalinowski’s seem to think about on a daily basis.

Kalinowski says her team closely monitored the release of Ray-Ban Meta and incorporated the lessons learned into the next big product. Things like front-facing privacy LEDs and other similar features have made it easier for people to have cameras on (and in) their faces throughout the day.

And while not everyone will be comfortable wearing them all the time, I can definitely imagine a future where most people walk around with some form of reality-augmenting technology on their bodies. Whether this looks more like Ray-Ban Metas or the Humane AI Pin, it’s very likely that the current app-based world we live in will become a thing of the past.
